Kimi K2.5's Agent Swarm: 100 Parallel Sub-Agents, 1,500 Tool Calls, and a 4.5x Speedup

Moonshot AI's Kimi K2.5 doesn't run one agent that thinks really hard. It spawns up to 100 sub-agents that work simultaneously, coordinates up to 1,500 tool calls across them, and finishes complex workflows up to 4.5 times faster than a single-agent setup. Released on January 27, 2026, it's one of the first models to bake parallel swarm orchestration directly into its training objective, and it forces a real architectural question for anyone building agent systems: when is a swarm worth the complexity?

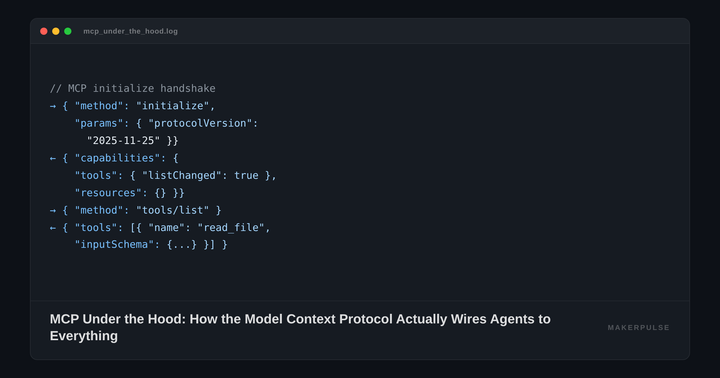

What K2.5 Actually Does

Kimi K2.5 is a 1-trillion-parameter Mixture-of-Experts model that activates 32 billion parameters per token. It's built on the Kimi-K2 base with continual pretraining on roughly 15 trillion mixed visual and text tokens, giving it native multimodal capabilities. The weights are released under a modified MIT license on Hugging Face, with a commercial attribution clause that kicks in at 100 million monthly active users or $20 million in revenue.

But the headline feature is Agent Swarm. K2.5 ships with four operating modes: Instant (fast responses), Thinking (chain-of-thought reasoning), Agent (single-agent tool use), and Agent Swarm (parallel multi-agent execution, currently in beta). The jump from Agent to Agent Swarm is the interesting part.

In Agent Swarm mode, the model acts as an orchestrator. Given a complex task, it decomposes the work into parallelizable subtasks, dynamically instantiates sub-agents to handle each one, and coordinates their outputs. No predefined roles. No hand-wired pipelines. The model decides how many agents to spawn, what tools each one uses, and how to merge the results.
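The decompose, delegate, and merge loop described above can be sketched as a plain fan-out/fan-in in Python. This is only an illustration of the pattern, not Moonshot's implementation: `decompose`, `run_subagent`, and `merge` are hypothetical stand-ins, and in K2.5 the model itself makes those decisions rather than hand-written functions.

```python
import asyncio


def decompose(task: str) -> list[str]:
    # Hypothetical split into three parts; in K2.5 the orchestrator model
    # decides how many subtasks to create.
    return [f"{task} / part {i}" for i in range(1, 4)]


async def run_subagent(subtask: str) -> str:
    # Hypothetical sub-agent: a real one would call a model and its tools.
    await asyncio.sleep(0.01)
    return f"result for {subtask}"


def merge(results: list[str]) -> str:
    # Hypothetical merge step: simply concatenates the sub-agent outputs.
    return "\n".join(results)


async def orchestrate(task: str, max_parallel: int = 100) -> str:
    # Cap concurrency so no more than max_parallel sub-agents run at once.
    semaphore = asyncio.Semaphore(max_parallel)

    async def bounded(subtask: str) -> str:
        async with semaphore:
            return await run_subagent(subtask)

    # Fan out all subtasks, then fan in; gather preserves input order.
    results = await asyncio.gather(*(bounded(s) for s in decompose(task)))
    return merge(list(results))
```

Calling `asyncio.run(orchestrate("compare vendors"))` runs the three hypothetical subtasks concurrently and returns their merged output.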

The numbers from Moonshot's internal evaluations: an 80% reduction in end-to-end runtime on complex workloads, with critical-path steps dropping by 3x to 4.5x versus single-agent execution. On BrowseComp, a benchmark that tests deep web research, Agent Swarm scored 78.4% compared to 60.6% for single-agent mode. That's a gain of 17.8 percentage points, or about 29% in relative terms, between two modes of the same model.
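For readers who want to check the arithmetic behind these figures, here is a small sketch. The helper names are made up for illustration; the inputs are the numbers quoted above.

```python
def relative_gain(new_score: float, old_score: float) -> float:
    """Relative improvement of new_score over old_score, as a fraction."""
    return (new_score - old_score) / old_score


def speedup_from_reduction(runtime_reduction: float) -> float:
    """Convert a fractional runtime reduction (0.80 = 80%) into a speedup factor."""
    if not 0.0 <= runtime_reduction < 1.0:
        raise ValueError("runtime_reduction must be in [0, 1)")
    return 1.0 / (1.0 - runtime_reduction)


swarm, single = 78.4, 60.6
print(f"absolute gain: {swarm - single:.1f} points")
print(f"relative gain: {relative_gain(swarm, single):.1%}")
print(f"speedup implied by an 80% runtime cut: {speedup_from_reduction(0.80):.1f}x")
```

An 80% runtime cut corresponds to a 5x end-to-end speedup, which is higher than the 3x to 4.5x critical-path figure. The two numbers presumably measure different things or come from different workloads; the quoted results don't say which.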

How PARL Trains a Swarm

Training a model to orchestrate parallel agents is harder than it sounds. Moonshot developed Parallel-Agent Reinforcement Learning (PARL) to solve two specific failure modes.

The first is "serial collapse": the orchestrator learns to just run everything sequentially through one agent, ignoring its ability to parallelize. This happens because sequential execution is a simpler policy to learn, and standard RL rewards don't penalize it.

The second is "spurious parallelism": the orchestrator spawns agents that do meaningless work just to collect parallelism rewards.

PARL handles both with a staged reward function that combines three signals. An instantiation reward encourages the model to create sub-agents. A sub-agent finish rate prevents reward hacking by ensuring spawned agents actually complete useful work. A task-level outcome score measures whether the overall goal was achieved. Early in training, the rewards push harder on parallelism. Later stages shift the weight toward task success.
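To make the staged reward concrete, here is a minimal sketch of how three such signals could be combined. Everything specific in it is an assumption for illustration: the additive form, the shared auxiliary weight `lam`, the linear annealing schedule, and the 0.5 starting value are not taken from Moonshot's description.

```python
def auxiliary_weight(step: int, total_steps: int, start: float = 0.5) -> float:
    # Assumed schedule: the weight on the parallelism signals decays linearly
    # from `start` to 0 over training.
    progress = min(max(step / total_steps, 0.0), 1.0)
    return start * (1.0 - progress)


def staged_reward(spawned: int, finished: int, task_score: float,
                  step: int, total_steps: int) -> float:
    # Instantiation reward: did the orchestrator create any sub-agents?
    instantiation = 1.0 if spawned > 0 else 0.0
    # Finish rate: fraction of spawned sub-agents that completed their work.
    finish_rate = finished / spawned if spawned > 0 else 0.0
    lam = auxiliary_weight(step, total_steps)
    # Early on the auxiliary terms matter; late in training only task_score does.
    return lam * instantiation + lam * finish_rate + task_score
```

Under these assumptions, an early-training rollout that spawns four agents which all finish and solve the task earns more than a purely serial success, while agents spawned but never finished earn only the instantiation term. By the final step, only the task outcome counts.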

The result is an orchestrator that parallelizes when it helps and runs sequentially when it doesn't, without a human defining the decision boundary.

Single-Agent vs. Agent Swarm: The Practitioner Trade-off

If you're building agent systems today, you're choosing between two patterns: a single agent or a swarm. (xAI's Grok 4.20, shipped the same week, takes a different approach to multi-agent architecture: a 4-agent debate system that runs agents in parallel to reduce hallucinations rather than to speed up task completion.)

Single-agent architectures (the approach used by most Claude, GPT, and Gemini-based systems) run one model instance that reasons through a task step by step. The model plans, calls tools, evaluates results, and iterates. This is simpler to build, debug, and observe. When something goes wrong, you have one chain of thought to inspect. Costs are predictable. Latency grows roughly in proportion to the number of sequential steps.

Agent swarm architectures (what K2.5 implements) run many model instances simultaneously. The orchestrator splits the problem, delegates pieces, and assembles results. This is faster for parallelizable work, but harder to debug. If sub-agent 47 of 100 returns bad data, finding the error means tracing through concurrent execution logs. Costs multiply with the number of active agents. And not every task benefits from parallelism.

The honest split: if your workload is mostly sequential (write code, run tests, fix errors, repeat), a single-agent setup will be simpler and potentially cheaper. If your workload is naturally parallel (research 50 companies simultaneously, process 200 documents, compare data from dozens of sources), swarm architecture can cut wall-clock time dramatically.
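One rough way to reason about this split is Amdahl's law, which is a standard model and not something Moonshot's results are based on. The sketch below assumes you can estimate what fraction of a task's single-agent runtime is parallelizable; the `overhead` term for coordination cost is an added assumption.

```python
def estimated_speedup(parallel_fraction: float, agents: int,
                      overhead: float = 0.0) -> float:
    """Amdahl-style estimate of wall-clock speedup over a single agent.

    parallel_fraction: share of single-agent runtime that can run in parallel.
    agents: number of sub-agents working on the parallel share.
    overhead: coordination cost, as a fraction of single-agent runtime (assumed).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if agents < 1:
        raise ValueError("agents must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / agents + overhead)


# A mostly sequential coding loop versus a mostly parallel research task.
print(f"20% parallel, 100 agents: {estimated_speedup(0.2, 100):.2f}x")
print(f"90% parallel, 100 agents: {estimated_speedup(0.9, 100):.2f}x")
```

The takeaway matches the split above: when most of the work is sequential, adding agents barely moves wall-clock time, and the serial share quickly becomes the ceiling.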

K2.5's BrowseComp results illustrate this clearly. Web research is embarrassingly parallel: each source can be fetched and analyzed independently. That's exactly where speedups approaching 4.5x materialize. On SWE-Bench Verified (sequential coding tasks), K2.5 scores 76.8%, close to GPT-5.2 Codex's 80.0% and Claude Opus 4.5's 80.9%, but these tasks gain little from the swarm. On the OSWorld visual control benchmark, Qwen 3.5 scores 62.2 on desktop tasks, a useful reference point when evaluating which model class fits your automation needs.

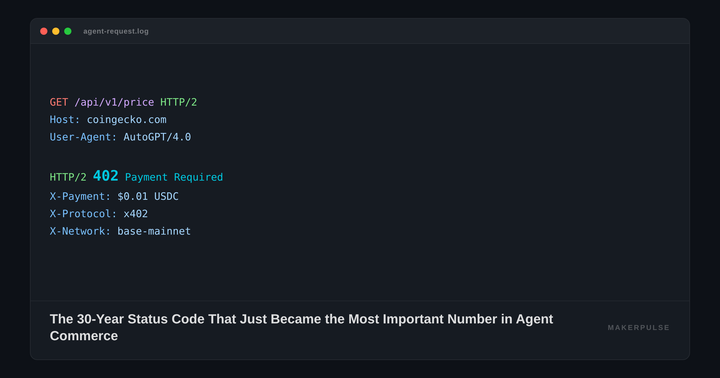

The Pricing Angle

K2.5's API costs $0.60 per million input tokens (cache miss) and $3.00 per million output tokens. Cache hits drop to $0.10 per million input tokens. That's roughly 8x cheaper than Claude Opus 4.5 on input and well below GPT-5.2. Per-token price is not the whole story, though: our model cost map puts K2.5's per-run cost at $0.33, 5x more expensive than Gemini Flash for comparable task quality.

But Agent Swarm multiplies token consumption. If your swarm spawns 20 sub-agents, each making 50 tool calls, you're burning through tokens at roughly 20x the rate of a single agent doing the same 50 calls. The wall-clock time savings are real, but the token cost per task could end up higher than a single-agent run on a more expensive model. Practitioners will need to benchmark their specific workloads to know which approach is cheaper in total.
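A quick way to run that comparison is to plug your own token counts into a small cost model. In the sketch below, the K2.5 prices are the cache-miss figures quoted above; the `PREMIUM` prices and the per-agent token counts are placeholder assumptions, so substitute real values from your provider and your traces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per million input tokens
    output_per_million: float  # USD per million output tokens


def run_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
             agents: int = 1) -> float:
    """USD cost of one task, assuming every agent uses the same token counts."""
    per_agent = (input_tokens * pricing.input_per_million
                 + output_tokens * pricing.output_per_million) / 1_000_000
    return agents * per_agent


K25 = Pricing(input_per_million=0.60, output_per_million=3.00)
PREMIUM = Pricing(input_per_million=5.00, output_per_million=25.00)  # assumed

# Hypothetical workload: 200k input and 20k output tokens per agent.
single_k25 = run_cost(K25, 200_000, 20_000)
swarm_k25 = run_cost(K25, 200_000, 20_000, agents=20)
single_premium = run_cost(PREMIUM, 200_000, 20_000)

print(f"single K2.5: ${single_k25:.2f}")
print(f"20-agent K2.5 swarm: ${swarm_k25:.2f}")
print(f"single premium model: ${single_premium:.2f}")
```

With these placeholder numbers the 20-agent swarm costs more per task than a single run on the pricier model, which is the scenario the paragraph above warns about. The model ignores cache hits and orchestrator overhead, both of which will shift real totals.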

The open-weight release changes this calculus for teams with GPU access. Qwen 3.5 was released under Apache 2.0 the same month, creating genuine competition at the open-weight frontier tier, so it's worth benchmarking both before committing to self-hosted infrastructure. Activating only 32 billion parameters per token keeps compute per token modest, but all 1 trillion parameters still have to be stored: roughly 500 GB of weights at INT4, which points to a multi-GPU server or heavy offloading rather than a typical consumer rig. Self-hosting replaces per-token API fees with hardware and operating costs, which can make the swarm pattern economically viable for high-throughput workloads.
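Before sizing hardware, it helps to do a back-of-the-envelope memory estimate. The sketch below counts weights only and assumes uniform INT4 quantization; KV cache, activations, and runtime overhead come on top, and real checkpoints may keep some layers at higher precision.

```python
def weight_footprint_gb(parameters: float, bits_per_weight: int) -> float:
    """Approximate storage for model weights in gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


TOTAL_PARAMS = 1e12    # all experts, which must be stored somewhere
ACTIVE_PARAMS = 32e9   # parameters used for any single token

print(f"all weights at INT4: {weight_footprint_gb(TOTAL_PARAMS, 4):.0f} GB")
print(f"active weights at INT4: {weight_footprint_gb(ACTIVE_PARAMS, 4):.0f} GB")
```

The activated-parameter count drives compute per token, but the total parameter count drives how much memory or offload storage the deployment needs.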

What's Missing

Agent Swarm is still in beta. Moonshot has been transparent about the failure modes that PARL was designed to address, but production reliability data from external teams is sparse. The 4.5x speedup comes from Moonshot's internal evaluations on specific benchmarks. Real-world tasks with messy dependencies between sub-tasks will likely see smaller gains.

Observability is the bigger gap. Debugging 100 concurrent agents requires tracing tools that most teams don't have. Moonshot hasn't shipped dedicated observability for Agent Swarm, and standard LLM tracing tools (LangSmith, Arize, Braintrust) weren't built for this pattern.
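Until dedicated tooling exists, one low-tech mitigation is to tag every log line with the sub-agent that produced it, so concurrent output can be filtered per agent afterwards. The sketch below uses only Python's standard library and is not tied to any Moonshot API; the agent naming scheme and the in-memory handler are assumptions for illustration.

```python
import asyncio
import contextvars
import logging

# Each asyncio task gets its own copy of the context, so this value is per agent.
current_agent = contextvars.ContextVar("current_agent", default="orchestrator")


class AgentTagFilter(logging.Filter):
    def filter(self, record: logging.LogRecord) -> bool:
        record.agent = current_agent.get()  # attach the agent id to the record
        return True


class ListHandler(logging.Handler):
    """Keeps (agent, message) pairs in memory; swap for a real sink in practice."""

    def __init__(self) -> None:
        super().__init__()
        self.records: list[tuple[str, str]] = []

    def emit(self, record: logging.LogRecord) -> None:
        self.records.append((record.agent, record.getMessage()))


logger = logging.getLogger("swarm_trace")
logger.setLevel(logging.INFO)
logger.propagate = False
handler = ListHandler()
handler.addFilter(AgentTagFilter())
logger.addHandler(handler)


async def sub_agent(index: int) -> None:
    current_agent.set(f"agent-{index}")  # visible only inside this task
    logger.info("started")
    await asyncio.sleep(0)  # yield so agents interleave
    logger.info("finished")


async def run_swarm(count: int = 3) -> None:
    logger.info("spawning %d agents", count)
    await asyncio.gather(*(sub_agent(i) for i in range(count)))


def trace_for(agent: str) -> list[str]:
    return [message for tag, message in handler.records if tag == agent]
```

After `asyncio.run(run_swarm(3))`, calling `trace_for("agent-1")` returns only that agent's messages even though the raw log is interleaved. The same idea scales to sub-agent 47 of 100, though a production setup would also want timestamps and parent-child links between orchestrator and sub-agent spans.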

Enterprise control is another concern. Some organizations want to keep orchestration logic separate from the model. Baking the swarm orchestrator into the model itself means you can't swap out the coordination strategy without swapping the model. Teams that want flexibility in how they compose agents may prefer an external orchestration layer (LangGraph, CrewAI, AutoGen) with a simpler model.

The Bottom Line

Kimi K2.5 is the strongest argument yet that agent architecture is moving toward parallelism. The PARL training approach solves real problems, the benchmarks show meaningful speedups on parallel-friendly tasks, and the combination of open weights and an API priced at $0.60/M input tokens gives practitioners an affordable way to experiment.

But swarm architecture isn't a universal upgrade over single-agent setups. It's a different tool for a different class of problems. If you're processing large batches of independent tasks and wall-clock time matters more than token efficiency, K2.5's Agent Swarm is worth testing. If you're building a coding assistant or a step-by-step workflow, a single-agent architecture will serve you better with less operational overhead.

The real shift isn't that swarms are "better." It's that parallel agent orchestration is now available as a built-in model capability rather than something you have to wire up yourself. That lowers the engineering cost of going parallel, and it will reshape how teams design agent systems.