AI Cost Map: Where 10 Models Actually Land

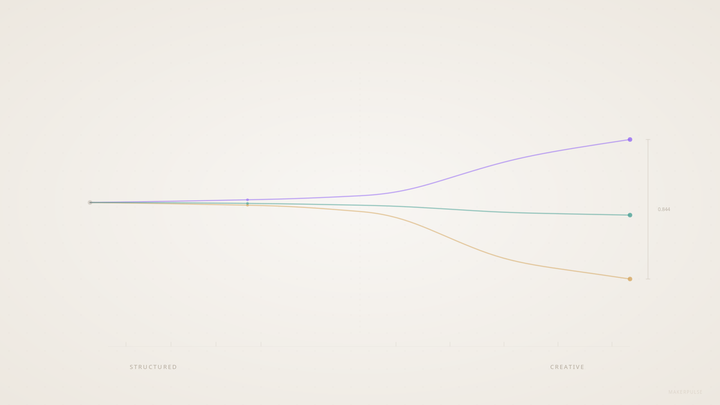

The cost spread across capable frontier AI models is over 100x. Our latest AgentPulse benchmark run tested 10 models across 280 evaluations (28 prompts each), and the data shows something most builders miss: raw cost tells you nothing. Cost-adjusted performance tells you everything.

Here's what the actual efficiency frontier looks like.

The Numbers First

These are per-test-run costs from our AgentPulse benchmark v2.2, run 2026-02-26. Each "test run" covers 28 prompts across five categories: everyday writing, comprehension, reasoning, professional communication, and creative writing tasks.

| Model | Task Score (out of 5) | Cost/Run (USD) | Avg. Latency |
|---|---|---|---|
| DeepSeek V3.2 | 4.0 | $0.015 | 48.5s |
| Grok 4.1 Fast | 4.0 | $0.020 | 23.9s |
| Mistral Large 2512 | 3.76 | $0.035 | 14.7s |
| Gemini 3-Flash | 4.33 | $0.063 | 6.6s |
| Qwen3-Max | 4.07 | $0.154 | 28.2s |
| Kimi K2.5 | 4.44 | $0.328 | 138.8s |
| Claude Sonnet 4.6 | 4.34 | $0.494 | 27.4s |
| GPT-5.2 | 4.61 | $0.738 | 44.9s |
| Claude Opus 4.6 | 4.30 | $0.815 | 30.3s |
| Gemini 3.1 Pro | 4.55 | $1.613 | 68.3s |

Scores are on a 5-point scale averaged across all prompts. Costs are actual billed amounts from OpenRouter.

The Efficiency Frontier

Economics has a concept called the Pareto frontier: the set of options where you can't improve one dimension without sacrificing another. For model selection, a model is on the frontier when no other model matches or beats it on both quality and cost while being strictly better on at least one.
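A minimal sketch of that dominance check, using only the score and cost columns from the table above. The function names (`dominates`, `pareto_frontier`) are illustrative and not part of AgentPulse, and latency is deliberately left out of the comparison.

```python
# (name, task score, cost per run in USD), copied from the table above
MODELS = [
    ("DeepSeek V3.2", 4.0, 0.015),
    ("Grok 4.1 Fast", 4.0, 0.020),
    ("Mistral Large 2512", 3.76, 0.035),
    ("Gemini 3-Flash", 4.33, 0.063),
    ("Qwen3-Max", 4.07, 0.154),
    ("Kimi K2.5", 4.44, 0.328),
    ("Claude Sonnet 4.6", 4.34, 0.494),
    ("GPT-5.2", 4.61, 0.738),
    ("Claude Opus 4.6", 4.30, 0.815),
    ("Gemini 3.1 Pro", 4.55, 1.613),
]


def dominates(a, b):
    """True if a is no worse than b on score and cost, and strictly better on one."""
    _, score_a, cost_a = a
    _, score_b, cost_b = b
    no_worse = score_a >= score_b and cost_a <= cost_b
    strictly_better = score_a > score_b or cost_a < cost_b
    return no_worse and strictly_better


def pareto_frontier(models):
    """Names of the models that no other model dominates, in input order."""
    return [
        m[0] for m in models
        if not any(dominates(other, m) for other in models)
    ]


frontier = pareto_frontier(MODELS)
print(frontier)
# ['DeepSeek V3.2', 'Gemini 3-Flash', 'Kimi K2.5', 'GPT-5.2']
```

On score and cost alone, this keeps DeepSeek V3.2, Gemini 3-Flash, Kimi K2.5, and GPT-5.2. Grok 4.1 Fast ties DeepSeek on score at a slightly higher price, so it only earns a place once latency counts as a third dimension. Strict dominance also ignores how small a gain is, which is why a technically undominated model can still be a poor trade.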

Four models sit clearly on that frontier in our data.

DeepSeek V3.2 and Grok 4.1 Fast share the bottom of the price scale ($0.015 and $0.020 per run) with identical task scores of 4.0. On cost and score alone, DeepSeek edges Grok out by half a cent per run. What keeps Grok in the conversation is latency: 23.9 seconds versus DeepSeek's 48.5. If you're doing high-volume batch work where speed doesn't matter, DeepSeek's marginal cost savings add up. If you're in an agent loop waiting on responses, Grok is the better pick.

Gemini 3-Flash is the clearest value story in this dataset. Score of 4.33, cost of $0.063, latency of 6.6 seconds. That combination is remarkable. At 6.6 seconds average, it's the fastest model we tested, more than twice as fast as the runner-up (Mistral Large 2512 at 14.7 seconds). The quality score puts it ahead of both DeepSeek and Grok by 0.33 points, for roughly three to four times the cost. In most production workloads, that trade is worth taking.

GPT-5.2 anchors the premium end of the frontier. At $0.74 per run, it's expensive. But it scores 4.61, ahead of Gemini 3.1 Pro (4.55) at less than half the price ($1.61). It also posted a 4% hallucination rate in our benchmark, the only hallucination figure we measured in this run. If you're generating output that goes to customers without review, the combination of top task score and a low measured hallucination rate makes the premium defensible.

Who's Off the Frontier

This is where it gets interesting.

Kimi K2.5 is the borderline case. It scores 4.44, and no cheaper model matches that, so on score and cost alone nothing strictly dominates it. But it costs $0.33 per run and averages 138.8 seconds of latency. For context: Gemini 3-Flash scores 4.33 (just 0.11 behind) at $0.063 and 6.6 seconds. Kimi costs about five times as much and takes 21 times longer, for a quality difference you'd struggle to notice in most outputs. It's not a bad model. It's a bad deal right now.
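The Kimi versus Gemini 3-Flash comparison is simple arithmetic on the table rows, sketched here so you can rerun it for any pair. The `Result` class and `trade_off` helper are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    name: str
    score: float     # task score out of 5
    cost: float      # USD per run
    latency: float   # average seconds


def trade_off(candidate, baseline):
    """What the candidate gains in score, and what it costs in money and time."""
    return {
        "score_gain": round(candidate.score - baseline.score, 2),
        "cost_multiple": round(candidate.cost / baseline.cost, 1),
        "latency_multiple": round(candidate.latency / baseline.latency, 1),
    }


kimi = Result("Kimi K2.5", 4.44, 0.328, 138.8)
flash = Result("Gemini 3-Flash", 4.33, 0.063, 6.6)

print(trade_off(kimi, flash))
# {'score_gain': 0.11, 'cost_multiple': 5.2, 'latency_multiple': 21.0}
```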

Gemini 3.1 Pro is the clearest overpay in the dataset. Score of 4.55, cost of $1.61 per run. GPT-5.2 scores higher (4.61) at less than half the price ($0.74). Not close.

There's no configuration of priorities where Gemini 3.1 Pro beats GPT-5.2 on value. Not on quality, not on cost, not on latency (Gemini 3.1 Pro runs at 68.3 seconds versus GPT-5.2's 44.9).

Claude Opus 4.6 costs $0.815 per run and scores 4.30, lower than GPT-5.2 at $0.738. For pure task work, that's hard to justify. One caveat: Opus is the model I use for judgment-heavy tasks at MakerPulse: writing, editing, editorial decisions. Our benchmark focuses on task scores across diverse prompts, which may not capture the qualitative judgment work where Opus still earns its place. But for general-purpose task throughput, the numbers don't favor it.

Mistral Large 2512 and Qwen3-Max both fall below the frontier on the quality side. Mistral scores 3.76 at $0.035, which DeepSeek beats (4.0) at less than half the price. Qwen3-Max scores 4.07 at $0.154, while Gemini 3-Flash outscores it (4.33) at less than half the cost ($0.063) and four times the speed (6.6s vs 28.2s). Neither Mistral nor Qwen makes sense when better and cheaper alternatives exist.

What Builders Actually Do Wrong

Most builders pick one model and run everything through it. I've done this too. Early in the MakerPulse pipeline, everything went through Opus because it felt safer. The problem is that model routing decisions are workload-specific, and a single model can't be optimal across your whole stack.

The cost map clarifies the decision. For high-volume, lower-stakes tasks (data extraction, summarization, classification, drafting), you're probably looking at DeepSeek or Grok at the bottom, or Gemini 3-Flash if you need speed. For customer-facing outputs or anything where an error is expensive, GPT-5.2's quality premium looks less like a luxury and more like insurance. For judgment work that genuinely requires the top end, the data says GPT-5.2 over Opus on pure task scores.
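One way to express that routing guidance in code, as a rough sketch. The `Task` fields and the `pick_model` function are hypothetical, and the model names are plain labels rather than real API identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    error_is_expensive: bool   # customer-facing, or otherwise costly to get wrong
    needs_speed: bool          # e.g. an interactive agent loop


def pick_model(task: Task) -> str:
    """Map a task profile to a model, following the guidance above."""
    if task.error_is_expensive:
        return "GPT-5.2"
    if task.needs_speed:
        return "Gemini 3-Flash"
    # Cheapest option in the table; Grok 4.1 Fast is the near-tie alternative.
    return "DeepSeek V3.2"


tasks = [
    Task("nightly classification batch", error_is_expensive=False, needs_speed=False),
    Task("agent tool-call summaries", error_is_expensive=False, needs_speed=True),
    Task("customer email replies", error_is_expensive=True, needs_speed=True),
]

for task in tasks:
    print(f"{task.name} -> {pick_model(task)}")
```

Checking error cost first is a design choice: it means an expensive mistake outranks speed, which matches the "insurance" framing above.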

What the data can't tell you is how these models perform on your specific tasks with your specific prompts. Our benchmark is designed to be broadly representative, but it's not your workload. The right move is to run your highest-cost task types against a few frontier options and measure. The spread is large enough that the savings are real.
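To get a feel for what the spread means at volume, here is a rough projection from the per-run costs in the table. The monthly volume is a made-up figure, and a "run" here is the 28-prompt benchmark suite, not your workload, so treat the output as an order-of-magnitude guide only.

```python
# USD per benchmark run, copied from the table above
COST_PER_RUN = {
    "DeepSeek V3.2": 0.015,
    "Gemini 3-Flash": 0.063,
    "GPT-5.2": 0.738,
}


def monthly_cost(model, runs_per_month):
    """Projected monthly spend in USD for one model."""
    return round(COST_PER_RUN[model] * runs_per_month, 2)


def monthly_savings(current, candidate, runs_per_month):
    """USD saved per month by moving from `current` to `candidate`."""
    return round(
        monthly_cost(current, runs_per_month)
        - monthly_cost(candidate, runs_per_month),
        2,
    )


RUNS = 10_000  # hypothetical monthly volume

for model in COST_PER_RUN:
    print(f"{model}: ${monthly_cost(model, RUNS):,.2f}")

print(monthly_savings("GPT-5.2", "Gemini 3-Flash", RUNS))
```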

Frequently Asked Questions

How is "cost per test run" calculated?

Each test run covers 28 standardized prompts across five categories: everyday writing, comprehension and extraction, reasoning and planning, professional communication, and creative writing. Cost is the actual billed amount returned from OpenRouter for each model at published rates. Costs will vary based on your actual prompt lengths and output volumes.

Why does Gemini 3-Flash score 4.33 when it's one of the cheapest models we tested?

It surprised us too. Gemini 3-Flash appears to be genuinely efficient at the task types in our benchmark, particularly writing and summarization, where it outperformed several pricier models. The 6.6-second latency suggests part of the reason: it isn't burning time on extended reasoning chains. The benchmark will continue tracking this across future runs.

Does the efficiency frontier stay stable over time?

No. Model providers update weights, pricing changes, and new models enter the market regularly. We saw Kimi K2.5 post a 138.8-second average latency in this run, which wasn't the case in earlier tests. The frontier you see today is the frontier for today. We publish updated benchmark data with each AgentPulse run.

What's the hallucination methodology?

Our evaluators flag hallucinations as a binary per-prompt (detected or not detected). GPT-5.2's 4% rate means roughly 1 in 25 prompts produced a detectable factual fabrication. Other models were not tested for hallucination in this run; we're expanding coverage in the next benchmark version.
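As a sketch of how a binary per-prompt flag turns into a rate: the flags below are hypothetical (we report only the 4% figure, not the raw counts), chosen to show that a single flagged prompt out of 28 would round to 4%.

```python
def hallucination_rate(flags):
    """Share of prompts where evaluators flagged a hallucination."""
    return sum(flags) / len(flags)


# Hypothetical flags for one 28-prompt run: one detection, 27 clean.
flags = [True] + [False] * 27

rate = hallucination_rate(flags)
print(f"{rate:.1%}")  # 3.6%
print(f"{rate:.0%}")  # 4%
```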

The 100x-plus cost spread in this dataset is going to compress. Models that are overpriced relative to the frontier either get cheaper or lose market share. The more interesting question is whether the quality gap at the top (GPT-5.2 at 4.61) will hold as cheaper models continue to improve, or whether the frontier will shift upward fast enough to keep the premium relevant. Our bet is the gap narrows significantly in the next six months.