Your AI Writes Well. Everyone Can Tell.

Llama 4 Maverick scores 3.48 on task quality in our AgentPulse v2.2 benchmark. Respectable. Then you ask it to write something creative, and the score drops to 2.02. A 42% collapse. Same model, same evaluation, different kind of work.

It's not alone. We tested 15 models on 28 prompts, with 3 runs per model aggregated by median. The pattern is consistent: most models are significantly worse at creative output than structured tasks. Some are dramatically worse.

| Model | Task Score | Creative Score | Drop |
|---|---|---|---|
| GPT-5.3-Codex | 4.64 | 4.11 | -11% |
| Claude Sonnet 4.6 | 4.34 | 4.01 | -8% |
| Claude Opus 4.6 | 4.39 | 3.96 | -10% |
| Kimi K2.5 | 4.53 | 3.79 | -16% |
| Gemini 3.1 Pro | 4.54 | 3.45 | -24% |
| DeepSeek R1 | 3.93 | 2.89 | -26% |
| Qwen3-Max | 4.15 | 2.89 | -30% |
| Llama 4 Maverick | 3.48 | 2.02 | -42% |
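If you want to check the Drop column or run the same math on your own scores, here is a minimal Python sketch. It assumes the drop is the relative change from task score to creative score, rounded to the nearest whole percent, which matches every row in the table. The `relative_drop` helper is our own illustrative name, not part of AgentPulse.

```python
# Scores copied from the table above: (task score, creative score).
scores = {
    "GPT-5.3-Codex": (4.64, 4.11),
    "Claude Sonnet 4.6": (4.34, 4.01),
    "Claude Opus 4.6": (4.39, 3.96),
    "Kimi K2.5": (4.53, 3.79),
    "Gemini 3.1 Pro": (4.54, 3.45),
    "DeepSeek R1": (3.93, 2.89),
    "Qwen3-Max": (4.15, 2.89),
    "Llama 4 Maverick": (3.48, 2.02),
}


def relative_drop(task: float, creative: float) -> float:
    """Percent change from task score to creative score (negative = worse).

    Assumption: this is how the Drop column was derived.
    """
    return (creative - task) / task * 100


# Round to whole percents, as the table does.
drops = {model: round(relative_drop(t, c)) for model, (t, c) in scores.items()}

# Print from smallest gap to largest.
for model, drop in sorted(drops.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model:<18} {drop:+d}%")
```

The drop is measured against each model's own task score, so it tells you how much a model loses when the work turns creative, not how it ranks against other models.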

The smallest gaps belong to frontier general models: Codex, Claude, Kimi. The largest gaps belong to reasoning-optimized models (DeepSeek R1), general models with weak creative output (Qwen3-Max), and open-source entries (Maverick). The more a model has been tuned for chain-of-thought reasoning, the more it pays in creative output quality. DeepSeek R1 literally spends its token budget thinking rather than writing. Useful for logic problems. Devastating for prose.

"Creative" doesn't mean fiction

Here's where this gets practical. When people hear "creative writing," they picture short stories and poetry. But for practitioners, creative writing is most of the writing you actually do.

Investor updates. Product launch emails. Proposals that need to convince someone. Documentation that needs personality. Sales copy that shouldn't read like a template. Blog posts. Newsletter intros. Slack messages to your team that need to land with the right tone.

All of this is writing where voice matters, where specificity matters, where sounding like a human with opinions is the whole point. And all of it falls in the gap between task score and creative score.

If you're using DeepSeek R1 or Qwen3-Max to draft your investor update, you're getting a 26-30% quality penalty compared to what those same models produce on structured tasks. You're getting the worst of both worlds: output that reads like AI and isn't even good AI.

The harder problem

So use GPT-5.3-Codex. It scores 4.11 on creative, only an 11% drop from task. Problem solved.

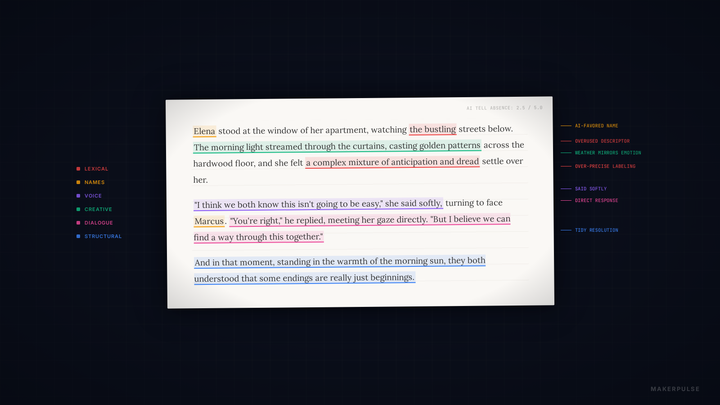

Not quite. We published a detailed analysis of AI writing tells two days ago using the same benchmark data. The finding: even the top creative scorers produce writing that's detectable as AI. GPT-5.3-Codex has the highest average tell score (3.76 out of 5) but passes the tell check on only 1 of 7 creative prompts. 14% pass rate.

GPT-5.2, with its 3.87 creative composite, scores zero passes. Beautiful writing that screams "AI" every time. The hedging, the over-structured paragraphs, the emotional flattening, the characters named Elena who speak in perfect sentences. It all compounds. No single tell is fatal. Dozens of subtle patterns together are.

A high creative score means better starting material. It does not mean finished product. The gap between "writes well" and "sounds human" is the gap that matters most, and no model closes it on its own.

The actual creative gap

The real divide isn't between GPT-5.3-Codex and DeepSeek R1. It's between people who accept AI output and people who work with it.

"Write me a blog post about our product launch" will produce detectable AI writing from every model in this dataset. Every single one. The tells are baked into how these models learned to write. They trained on patterns, and they reproduce patterns. That's what patterns do.

What changes the output is friction. Giving the model examples of your actual writing and telling it to match the rhythm. Rejecting the first draft. Pushing back when it hedges. Telling it to cut the three-act structure. Asking why it named the character Elara again. Feeding it the messy, imperfect, opinionated voice that you actually write in, and refusing to let it smooth the edges.

That's not prompting. That's editing. That's collaboration. And it's the only way to get output that doesn't trigger the tells.

The models that score highest on creative (Codex at 4.11, Sonnet at 4.01, Opus at 3.96) give you better raw material to start that process with. You're iterating from a stronger baseline. But if you stop at the first draft, you're publishing something that anyone who reads AI output regularly will clock in two paragraphs.

The routing decision

Here's the practical framework:

Structured work (code, data analysis, extraction, summarization, classification): use whatever scores highest on task. Reasoning models are fine here. DeepSeek R1 at 3.93 task score and $0.30 per run is a strong choice for work where voice doesn't matter.

Writing where voice matters (anything a human will read and judge): use a frontier general model. The 8-11% creative penalty from Codex or Claude is manageable. Kimi's 16% gap is larger but still workable. The 26-42% penalty from DeepSeek R1, Qwen3-Max, and Maverick is not.

Writing where voice matters and detectability matters: use a frontier general model and then actually work with it. Feed it your style. Reject the defaults. Edit the output. The model picks the direction. You drive.
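The three rules above can be sketched as a small routing function. This is an illustration under our own assumptions, not a prescribed implementation: the `route` function and `ModelScores` class are hypothetical names, "frontier general model" is approximated by "highest creative score in the table," and cost is left out because the data here only gives a per-run price for DeepSeek R1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelScores:
    name: str
    task: float
    creative: float


# Scores from the AgentPulse v2.2 table earlier in this post.
MODELS = [
    ModelScores("GPT-5.3-Codex", 4.64, 4.11),
    ModelScores("Claude Sonnet 4.6", 4.34, 4.01),
    ModelScores("Claude Opus 4.6", 4.39, 3.96),
    ModelScores("Kimi K2.5", 4.53, 3.79),
    ModelScores("Gemini 3.1 Pro", 4.54, 3.45),
    ModelScores("DeepSeek R1", 3.93, 2.89),
    ModelScores("Qwen3-Max", 4.15, 2.89),
    ModelScores("Llama 4 Maverick", 3.48, 2.02),
]


def route(models, voice_matters, detectability_matters=False):
    """Return (model_name, needs_human_editing) for a piece of work.

    Structured work: pick the highest task score.
    Voice matters: pick the highest creative score (our stand-in for
    "frontier general model").
    Detectability matters too: same model, but flag the draft for a
    human editing pass.
    """
    if not voice_matters:
        best = max(models, key=lambda m: m.task)
        return best.name, False
    best = max(models, key=lambda m: m.creative)
    return best.name, detectability_matters


print(route(MODELS, voice_matters=False))
print(route(MODELS, voice_matters=True))
print(route(MODELS, voice_matters=True, detectability_matters=True))
```

In practice you would add a cost or latency tiebreaker for structured work, since several models score within a few tenths of each other on task.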

The creative gap is real, and model choice is the first half of closing it. The second half is on you.

Data from AgentPulse v2.2. 15 models, 28 prompts, 3 runs per model, median aggregation. Full methodology and raw data at data.makerpulse.ai. Related: Every AI Writes Like an AI. Some Just Hide It Better.