Perplexity Bets the Future on Orchestration

Perplexity just launched Computer, a platform that decomposes complex goals into subtasks and routes each one to whichever AI model is best suited for the job. It coordinates 19 models. It runs tasks for hours or months. And it costs $200 a month.

If you've ever stitched together a multi-agent pipeline with LangGraph, CrewAI, or raw API calls, this product is aimed squarely at you. The question it raises is one every builder working with AI agents will eventually face: do you keep building your own orchestration, or do you let someone else handle it?

What Computer Actually Does

The architecture follows a pattern that will feel familiar to anyone running multi-model workflows. Claude Opus 4.6 serves as the central reasoning engine, handling orchestration logic and task allocation. Gemini powers deep research. GPT-5.2 manages long-context recall and web search. Grok handles lightweight, speed-sensitive tasks. Nano Banana generates images. Veo 3.1 produces video.

That's six named models with distinct roles, plus thirteen additional specialized models running underneath.
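To make the division of labor concrete, here is a minimal sketch of a role-to-model routing table. The model names and their roles come from the description above; the role keys, the `Subtask` type, and the `route` function are hypothetical names for illustration and say nothing about how Perplexity implements routing internally.

```python
from dataclasses import dataclass

# Roles as described in the article. The dictionary keys are
# hypothetical labels chosen for this sketch.
ROLE_TO_MODEL = {
    "orchestration": "Claude Opus 4.6",
    "deep_research": "Gemini",
    "long_context_recall": "GPT-5.2",
    "web_search": "GPT-5.2",
    "lightweight": "Grok",
    "image_generation": "Nano Banana",
    "video_generation": "Veo 3.1",
}


@dataclass(frozen=True)
class Subtask:
    description: str
    role: str


def route(subtask: Subtask) -> str:
    """Return the model for a subtask's role.

    Unknown roles fall back to the orchestrator model, which is an
    assumption of this sketch rather than documented behavior.
    """
    return ROLE_TO_MODEL.get(subtask.role, ROLE_TO_MODEL["orchestration"])


if __name__ == "__main__":
    plan = [
        Subtask("Collect competitor pricing pages", "web_search"),
        Subtask("Summarize annual reports", "deep_research"),
        Subtask("Render a chart thumbnail", "image_generation"),
    ]
    for task in plan:
        print(f"{task.description} -> {route(task)}")
```

A static table like this is the simplest possible router. A real orchestrator would presumably also weigh cost, latency, and context length per subtask.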

Every task runs inside an isolated computing environment with access to a real file system, a real browser, and external tool integrations. Work is asynchronous. You describe a goal, Computer breaks it down, and the agents execute in the background while you do something else. Perplexity says it can handle everything from competitive intelligence dashboards to building Android apps to running recurring monthly reports.
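The "describe a goal, then walk away" workflow maps naturally onto concurrent background tasks. The sketch below uses Python's standard `asyncio` to run hypothetical subtasks concurrently and collect their results. `run_subtask` is a stand-in that just sleeps; nothing here reflects Computer's real interface or sandboxing.

```python
import asyncio


async def run_subtask(name: str, seconds: float) -> str:
    # Stand-in for real agent work (browser automation, file I/O, model calls).
    await asyncio.sleep(seconds)
    return f"{name}: done"


async def run_goal(subtasks: list[tuple[str, float]]) -> list[str]:
    # Schedule every subtask at once so they run concurrently.
    jobs = [asyncio.create_task(run_subtask(name, secs)) for name, secs in subtasks]
    # gather returns results in the order the tasks were passed in,
    # regardless of which one finishes first.
    return await asyncio.gather(*jobs)


if __name__ == "__main__":
    results = asyncio.run(
        run_goal([("research", 0.02), ("draft report", 0.01), ("build chart", 0.0)])
    )
    print(results)
```

A system that runs tasks for hours or months would need durable queues and checkpointing rather than a single in-process event loop, so treat this only as an illustration of the execution shape.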

CEO Aravind Srinivas framed the philosophy plainly: "The orchestration is the product. The model is a tool." He borrowed from Steve Jobs: musicians play their instruments, Perplexity plays the orchestra.

The Multi-Model Insight Is Real

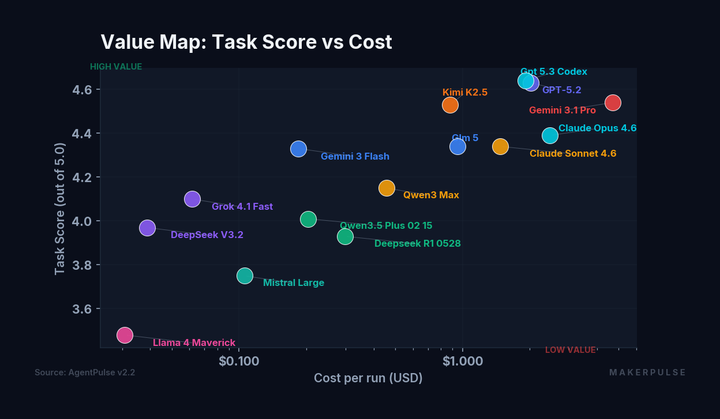

Here's why this product resonates with me. We've been running AgentPulse benchmarks across 15 frontier models over the past week, and the single clearest finding is that no model dominates everywhere.

Claude Opus 4.6 scores 4.39 on task quality but drops to 3.96 on creative writing. Gemini 3.1 Pro scores 4.63 on comprehension but costs 150 times more than Llama 4 Maverick per run. GPT-5.3 Codex, a model optimized for coding, somehow tops the creative writing leaderboard at 4.11. Grok 4.1 Fast delivers solid reasoning at a fraction of Gemini's latency.

The data makes the case for multi-model routing more convincingly than any product demo could. If you're using one model for everything, you're overpaying for some tasks and underperforming on others. Perplexity is essentially productizing this insight, turning model specialization into a managed service.
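As a rough illustration of score-based routing, the sketch below picks the highest-scoring model per task category. It uses only the scores quoted above, so the table is deliberately sparse; the category keys and the `best_model` helper are hypothetical names, not part of AgentPulse.

```python
# Scores quoted in this article only. A missing entry means the score
# was not cited here, not that the model scored zero.
SCORES = {
    "Claude Opus 4.6": {"task_quality": 4.39, "creative_writing": 3.96},
    "Gemini 3.1 Pro": {"comprehension": 4.63},
    "GPT-5.3 Codex": {"creative_writing": 4.11},
}


def best_model(category: str, scores: dict = SCORES) -> str:
    """Return the model with the highest known score for a category."""
    candidates = {
        model: by_category[category]
        for model, by_category in scores.items()
        if category in by_category
    }
    if not candidates:
        raise KeyError(f"no scores recorded for category {category!r}")
    return max(candidates, key=candidates.get)


if __name__ == "__main__":
    for category in ("task_quality", "creative_writing", "comprehension"):
        print(category, "->", best_model(category))
```

Routing on quality alone ignores the cost and latency differences mentioned above. A fuller version would score each candidate on quality per dollar or apply a latency ceiling.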

The $200 Question

Computer is available exclusively on Perplexity's Max tier at $200 per month, which includes 10,000 credits. The pricing sits in an interesting gap.

If you're running your own multi-agent setup, your costs are API calls plus infrastructure plus your time. A single Claude Opus 4.6 API call for a complex reasoning task can run $0.05 to $0.15 in tokens. Scale that across dozens of daily tasks involving multiple models, add a browser automation layer, persistent storage, and the engineering time to keep it all running, and $200/month starts to look competitive, especially if you value your time.

On the other hand, $200/month buys you a lot of API credits if you're strategic about model selection. Our AgentPulse data shows DeepSeek V3.2 delivers a task score of 3.97 for $0.04 per 28-prompt run. Kimi K2.5 hits 4.53 at $0.88. You can build a remarkably effective pipeline for under $50/month if you know which models to route where.
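The arithmetic behind these comparisons is easy to check. The sketch below works in whole cents to avoid floating-point rounding, and uses only figures quoted above: $0.05 to $0.15 per complex Opus call, $0.04 and $0.88 per 28-prompt benchmark run, and budgets of $200 and $50. It counts token spend only and assumes benchmark run costs are representative of real workloads, which they may not be.

```python
def units_within_budget(budget_cents: int, unit_cost_cents: int) -> int:
    """How many whole units (calls or runs) fit in a budget."""
    if unit_cost_cents <= 0:
        raise ValueError("unit cost must be positive")
    return budget_cents // unit_cost_cents


MANAGED_BUDGET_CENTS = 200 * 100  # Max tier price
DIY_BUDGET_CENTS = 50 * 100       # the "under $50/month" DIY target
PROMPTS_PER_RUN = 28              # size of one benchmark run

# Complex Opus calls that $200 buys at the cheap and expensive ends.
opus_calls_cheap = units_within_budget(MANAGED_BUDGET_CENTS, 5)    # 4000
opus_calls_pricey = units_within_budget(MANAGED_BUDGET_CENTS, 15)  # 1333

# Benchmark-sized runs that $50 buys on the two cheaper models.
deepseek_runs = units_within_budget(DIY_BUDGET_CENTS, 4)   # 1250
kimi_runs = units_within_budget(DIY_BUDGET_CENTS, 88)      # 56

if __name__ == "__main__":
    print(f"Opus calls for $200: {opus_calls_pricey} to {opus_calls_cheap}")
    print(f"DeepSeek V3.2 prompts for $50: {deepseek_runs * PROMPTS_PER_RUN}")
    print(f"Kimi K2.5 prompts for $50: {kimi_runs * PROMPTS_PER_RUN}")
```

Spread over a 30-day month, $200 covers roughly 44 to 133 complex Opus calls per day before any infrastructure or engineering cost is counted.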

The real cost of DIY isn't the API bill. It's the engineering time: building reliable error handling, managing model failovers, keeping up with API changes across five providers, and debugging why your Gemini call timed out at 3 AM. That's the maintenance tax that makes managed solutions attractive.
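To give a sense of what that maintenance work looks like, here is a minimal failover sketch: retry each provider with exponential backoff, then fall through to the next one. The provider callables are hypothetical stand-ins for real SDK calls, and `call_with_failover` and `AllProvidersFailed` are illustrative names rather than any library's API.

```python
import time
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every provider has exhausted its retries."""


def call_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
    retries_per_provider: int = 2,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[str, str]:
    """Try providers in order and return (provider_name, response)."""
    errors = []
    for name, call in providers:
        for attempt in range(retries_per_provider):
            try:
                return name, call(prompt)
            except Exception as exc:  # a real system would catch narrower errors
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
                # Back off before retrying the same provider; move on
                # to the next provider immediately after the last attempt.
                if attempt < retries_per_provider - 1:
                    sleep(base_delay * 2 ** attempt)
    raise AllProvidersFailed("; ".join(errors))


if __name__ == "__main__":
    def flaky(prompt: str) -> str:
        raise TimeoutError("timed out")

    def backup(prompt: str) -> str:
        return f"answer to: {prompt}"

    print(call_with_failover("hello", [("primary", flaky), ("backup", backup)],
                             sleep=lambda _: None))
```

Even this small version leaves out rate-limit handling, per-provider prompt differences, and logging, which is where much of the ongoing effort tends to go.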

Build vs. Buy: The Actual Tradeoff

The case for Computer is clear: you get multi-model orchestration without building and maintaining the infrastructure. Isolated environments. Browser access. Tool integrations. Asynchronous execution. Perplexity handles the model selection, the failovers, and the plumbing.

The case against it is equally clear: you lose control.

When I'm running agents through Claude Code, I decide exactly which model handles each step. I can inspect every intermediate output. I can swap in a cheaper model for a specific subtask when I notice it doesn't need Opus-level reasoning. I can fine-tune my prompts for each model's quirks. I can run locally when I don't want my data leaving my machine.

Srinivas actually drew a sharp contrast with local tools like OpenClaw, which run on your machine with access to your files and settings. Computer runs remotely in the cloud, in a locked-down sandbox. That's a feature for security, but a limitation for builders who need deep system integration.

The tradeoff comes down to a familiar split in software: managed services trade control for convenience. Builders who need deep customization, want to experiment with model routing strategies, or have unique workflows that don't fit a general-purpose orchestrator will keep building their own. Builders who want multi-model agents without the infrastructure overhead will see real value here.

What This Signals

Computer is the latest entry in what's becoming a clear market trend: orchestration as a service. The model layer is commoditizing. The value is moving up the stack to whoever coordinates these models most effectively.

This is the same pattern we saw in cloud computing. The companies that built the best abstraction layers above commodity infrastructure, not the infrastructure providers themselves, captured outsized value. Perplexity is betting the same dynamic applies to AI. The model is the commodity. The orchestration is the product.

For builders, this is actually good news regardless of whether you use Computer. The fact that a well-funded company is investing heavily in multi-model orchestration validates the architecture pattern. If you've already built your own routing layer, you're ahead of the curve. If you haven't, you now have both a managed option and a proven design pattern to follow.

The launch also confirms something our benchmark data has been showing: the era of picking one model and using it for everything is ending. The future is specialization: different models for different tasks, coordinated by an orchestration layer that knows when to use which.

Whether that orchestration layer is Perplexity's or one you build yourself is the only remaining question.

Perplexity Computer is available now on the Max tier ($200/month), with a rollout to Pro and Enterprise users planned in the coming weeks.