Every AI Writes Like an AI. Some Just Hide It Better

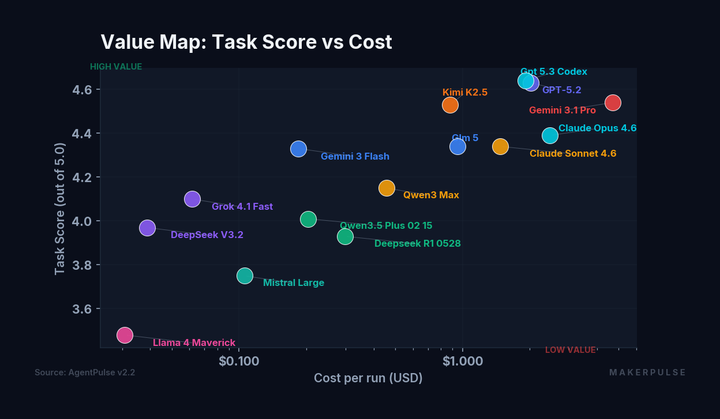

The prose is good now. Really good. In our AgentPulse v2.2 benchmark, which tested 15 models across 28 prompts with 3 runs each, the top four models score between 4.24 and 4.36 on prose craft (out of 5). GPT-5.3-Codex and Claude Sonnet 4.6 tie at 4.36. Opus sits at 4.31. GPT-5.2 rounds out the cluster at 4.24. The raw writing ability gap between frontier models has mostly closed.

And yet. You can still tell. Something about the rhythm, the word choice, the way every paragraph lands on its feet. It adds up. Not because any single sentence fails, but because the whole thing feels a little too composed.

We built a scoring system to measure that feeling.

The checklist

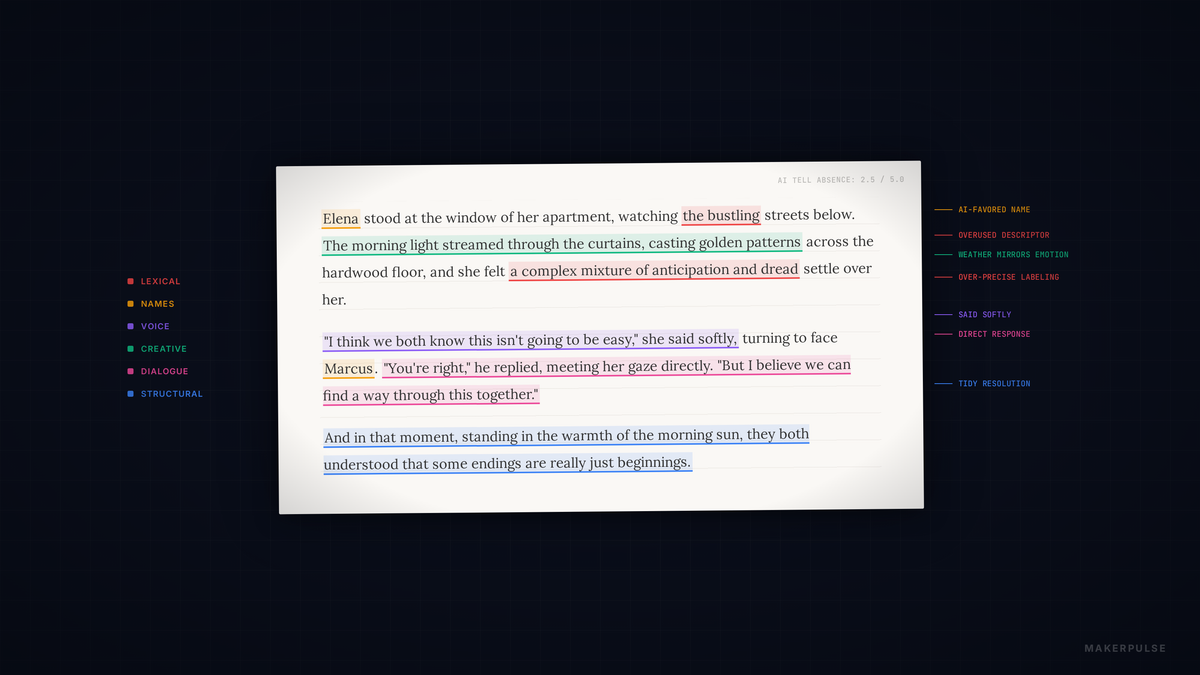

AgentPulse's "AI Tell Absence" dimension starts every creative response at 5.0, then deducts 0.5 for each category of detectable AI patterns the evaluators find. Six categories, drawn from patterns documented across hundreds of AI-generated fiction samples:

Structural: three-act defaults, tidy endings, uniform paragraph length. Lexical: "vibrant," "bustling," "palpable," "the weight of" plus abstract noun. Voice: relentless positivity, narrators who never lose composure, no genuine rambling. Creative: weather mirroring emotion, interchangeable characters, em-dash overuse. Character names: Elena, Marcus, Elara, Kai. Never Jim. Never Karen. Never Dave. Dialogue: no interruptions, no filler, no one talks past each other. Everyone speaks in perfect, complete sentences.

A 5.0 means no categories triggered. The pass threshold is 3.5, meaning three or fewer tell categories detected. Generous bar. Nobody consistently clears it.

The results

We ran the tell check across seven creative prompts (literary short story, sci-fi short story, long-form literary fiction, psychological horror, unreliable narrator, comedy, micro-fiction). The top five models:

| Model | Avg Tell Score | Pass Rate |

|---|---|---|

| GPT-5.3-Codex | 3.76 | 1/7 (14%) |

| Claude Sonnet 4.6 | 3.36 | 4/7 (57%) |

| Claude Opus 4.6 | 3.33 | 1/7 (14%) |

| Kimi K2.5 | 3.31 | 6/7 (86%) |

| GPT-5.2 | 3.24 | 0/7 (0%) |

Codex has the highest average tell score (3.76) but only passes on one prompt. Sonnet manages four. And then there's Kimi K2.5, fourth from bottom in average score, but passing the tell check on six of seven prompts. An 86% pass rate.

GPT-5.2, the fourth-best creative writer in the benchmark (3.87 composite), scores zero passes. Beautiful writing that screams "AI" every time.

The Kimi paradox

Kimi K2.5 ranks 5th in creative composite score (3.79). Its prose isn't as polished as the top tier. But it passes the tell check at a rate that no other model comes close to matching.

Why? The working theory: Kimi's outputs are rougher around the edges in ways that happen to read as more human. Less uniform paragraph structure. More willingness to leave things messy. It's not a strategy. It's a side effect of being less optimized for the qualities that drive high prose craft scores.

There's a tension in the data that none of these models have resolved: the things that make AI writing score well on prose craft are often the same things that make it detectable as AI. Consistent rhythm, controlled tone, clean structure. These are both virtues and tells simultaneously. The tells checklist rewards what polish punishes.

The GPT-5.2 paradox

GPT-5.2 is the opposite case. Prose craft of 4.24, creative composite of 3.87 (4th overall). The writing reads well sentence by sentence. But every response triggers enough tell categories to fail the check. Zero passes across seven prompts.

It writes the way an AI writing coach might tell you to write. Clean structure, evocative vocabulary, purposeful paragraphs, tidy endings. Humans don't actually write fiction like that. Human fiction has run-on thoughts, weird tangents, characters named Steve who say "yeah" a lot. GPT-5.2 never does any of that.

The prompts matter more than you'd think

Not all prompts expose tells equally. Across all models, the Unreliable Narrator prompt (P26) produces the lowest average tell detection at 3.16. The Micro-Fiction prompt (P28) produces the highest at 2.55.

The gap makes sense. An unreliable narrator requires voice inconsistency, subjective distortion, structural disorder. The prompt forces models to break their own defaults. Write a narrator who's wrong, messy, biased. That built-in messiness suppresses exactly the patterns the checklist catches.

Micro-fiction does the opposite. Compress a story into a few hundred words and there's no room for digression. Models fall back on their tightest patterns: the three-act arc in miniature, the clean metaphor, the perfect closing line. Every default compressed and amplified.

The evaluator problem

Here's where it gets meta. We use three AI evaluators (Claude, Gemini, OpenAI) and track how much they agree. On tone appropriateness, the combined mean absolute deviation is 0.398. On instruction following, 0.464. Reasonable agreement.

On emotional architecture (whether writing has genuine emotional depth) the MAD jumps to 0.844. Highest disagreement in the entire benchmark. Claude and OpenAI roughly agree (0.434 MAD). Gemini diverges from both. The Gemini-OpenAI gap on emotional architecture is 1.149. Over a full point of disagreement on a 5-point scale.

The evaluators can agree on mechanics. They cannot agree on whether writing is emotionally alive. AI judges struggle with the same qualities AI writers struggle to produce. They can measure craft. They can't measure soul. A deeper dive into what this reveals about the limits of AI judgment is coming. It deserves its own piece.

A note on comedy

One data point worth flagging: Grok 4.1 Fast scores 3.76 on the comedy prompt (P27). That's its best prompt by far, well above its overall creative average of 2.95. The top creative models score higher (Sonnet 4.18, Opus 4.14, Codex 4.08), but a model that otherwise writes mediocre fiction punching above its weight on humor is interesting.

The caveat: we're using AI evaluators to judge what's funny. Humor is deeply subjective and culturally specific. A dedicated humor study, with human evaluation alongside AI, is on our research roadmap.

The compounding thesis

Nobody fails because of one thing. GPT-5.2 doesn't score zero passes because it uses "vibrant" too much. It scores zero because it uses "vibrant" and writes uniform paragraphs and wraps every story in a tidy arc and names its characters Elara and gives every piece of dialogue equal weight. Each tell is minor. Together, they compound into writing that reads as synthetic.

Three or four categories triggering at once is the pattern, not one category failing dramatically. Dozens of small choices that are individually reasonable but collectively signal "machine."

Prose craft has converged. The top models write at roughly the same mechanical quality. The gap is in the human messiness that AI can't quite produce. Or more precisely, can't produce without sacrificing the polish that makes it score well on everything else. Kimi stumbles into it. The rest optimize past it.

The checklist measures the absence of that quality. The evaluators can't agree on what it is. But everyone recognizes it when it's missing.

Data from AgentPulse v2.2. 15 models, 28 prompts, 3 runs per model, median aggregation. Full methodology and raw data available at data.makerpulse.ai. See also: The First Model to Win Everything: GPT-5.3-Codex for complementary analysis from the same dataset.