AI Can't Agree on What Good Writing Is

AI Can't Agree on What Good Writing Is

We use three independent AI evaluators in the AgentPulse v2.2 benchmark: Claude Opus 4.6, Gemini 3.1 Pro, and GPT-5.2. Different providers, blinded evaluation, identical rubrics. They grade 15 models across 28 prompts with 3 runs each.

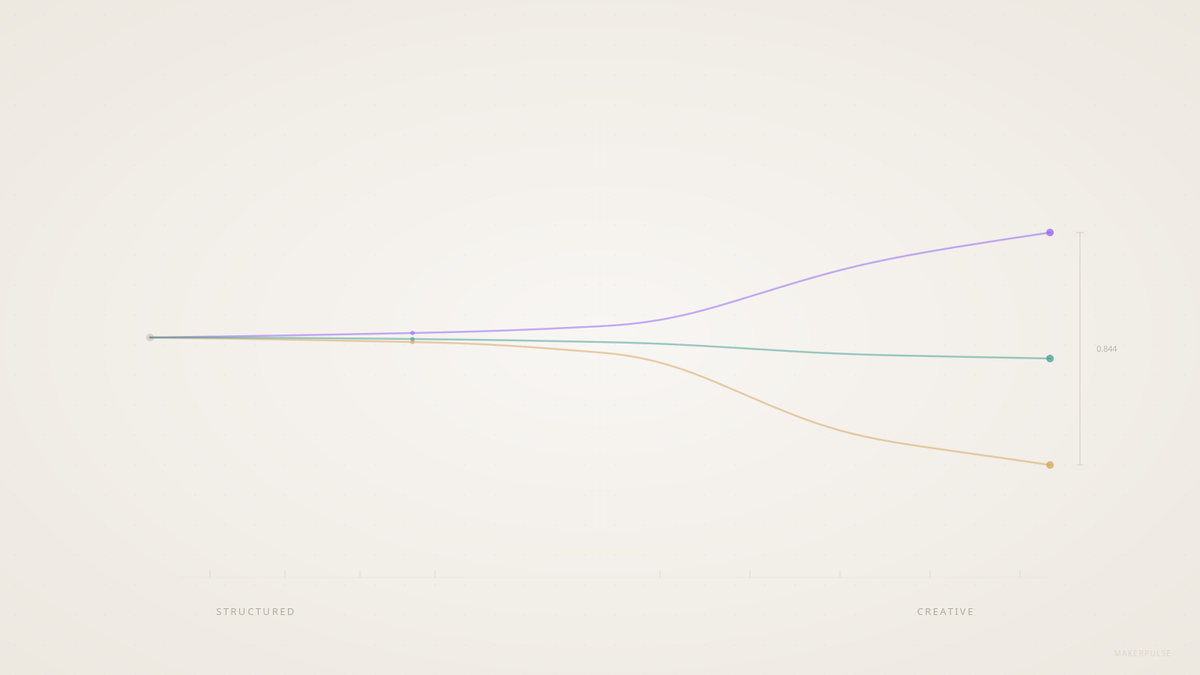

On structured tasks, they mostly agree. On creative writing, they fight.

The numbers

We measure evaluator agreement using mean absolute deviation (MAD) across all three pairwise combinations. A MAD of 0.4 means evaluators are typically less than half a point apart on a 5-point scale. A MAD of 0.8 means they're almost a full point apart.

| Dimension | MAD | Agreement |

|---|---|---|

| Tone appropriateness | 0.398 | Strong |

| Instruction following | 0.464 | Strong |

| Completeness | 0.478 | Strong |

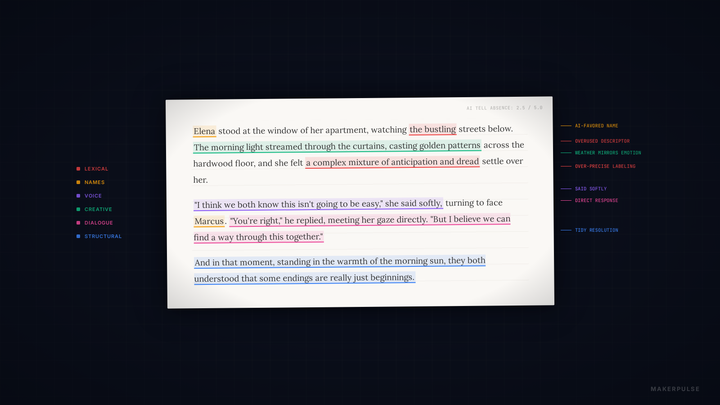

| AI tell absence | 0.492 | Decent |

| Prose craft | 0.638 | Moderate |

| Character & dialogue | 0.703 | Weak |

| Imagination & risk | 0.716 | Weak |

| Accuracy | 0.734 | Weak |

| Emotional architecture | 0.844 | Poor |

Read that table from top to bottom. The pattern is clean: the more a dimension requires understanding human experience, the less three frontier AI models can agree on how to score it.

Tone appropriateness (0.398) is basically "did the email sound professional?" They nail that. Instruction following (0.464) is "did you do what was asked?" No trouble. These are pattern-matching problems. AI is good at pattern-matching.

Emotional architecture (0.844) is "did this story make you feel something, and was that feeling earned through craft rather than declared through exposition?" Nearly a full point of disagreement. These evaluators are reading the same story and coming back with fundamentally different scores.

Not all evaluators are equal

The three pairwise disagreement rates tell their own story:

- Claude-OpenAI: 9.2% disagreement rate (Pearson r = 0.72)

- Claude-Gemini: 14.1% (r = 0.77)

- Gemini-OpenAI: 22.0% (r = 0.64)

Gemini and GPT-5.2 disagree on more than one in five creative writing scores. By research standards, a Pearson correlation of 0.64 is borderline. If these were human raters on an academic study, reviewers would ask questions.

There's also a self-bias angle. Claude scores its own provider's models 0.185 points higher than the other evaluators do. Gemini gives Google models a 0.159 boost. But OpenAI is actually harsher on its own models by 0.189 points. The blinding protocol prevents brand bias (evaluators never see model names), but style preferences still leak through.

Why accuracy is surprisingly contentious

You'd expect accuracy to land near the top of the agreement table. Facts are facts. But accuracy's MAD is 0.734, worse than prose craft.

The reason: our accuracy dimension covers two different things depending on the prompt. For extraction tasks with source material, it measures factual fidelity. For open-ended prompts, it measures analytical soundness. "Did you get the facts right?" is objective. "Is your analysis of this ethical dilemma sound?" is not. Same dimension label, very different evaluation problems.

This is one of those things you only discover by measuring evaluator agreement. The dimension looked clean in the rubric. The data said otherwise.

This isn't a benchmark problem

If three frontier AI models can't converge on what makes writing emotionally resonant, that has implications far beyond our benchmark.

Every company using AI to evaluate, filter, or rank creative content has this problem. AI-powered writing assistants that score your drafts? They're scoring against standards that other AI models would disagree with 15-22% of the time. Content platforms using LLMs to assess quality? The "quality" they're measuring shifts depending on which model they chose as their judge.

The disagreement isn't random noise. It's structural. Each model has been trained on different data, with different reward signals, by different teams with different ideas about what "good writing" looks like. When the task is mechanical, those differences don't matter. When the task requires understanding what a reader might feel, the differences surface immediately.

What I take away from this

We've now published three articles using AgentPulse creative data in a row. The first showed that AI writing is detectable even when it's good. The second showed that creative quality is where models drop hardest. This one shows that AI can't even agree on how to measure creative quality.

The thread connects: AI is still far from replicating human emotion. The evaluator disagreement isn't a measurement flaw. It's evidence that these models don't understand what makes creative writing land with a reader. They can approximate structure. They can identify whether instructions were followed. But the thing that separates competent writing from writing that makes you feel something? That's not a pattern-matching problem. It's a human one.

AI is a creative accelerator. I use it constantly. But every time I look at this data, it reinforces something I keep coming back to: without a human actually reading the output and reacting to it as a human, the AI is guessing. And three different guesses diverge by a full point on a five-point scale.

Good writing still needs people. Not because AI can't help. It can. But because the part of writing that matters most is the part AI understands least.